Dynamic random access memory is an essential part of all computer systems, and requirements for DRAM, such as performance, power, density, and physical implementation, tend to change from time to time. In the coming years, we will see new kinds of memory modules for laptops and servers as standard SO-DIMMs and RDIMMs/LRDIMMs appear to run out of steam in terms of performance, efficiency, and density.

At Computex 2023 in Taipei, Taiwan, ADATA showcased potential candidates to replace SO-DIMMs and RDIMMs/LRDIMMs in client and server machines, respectively, in the coming years, reports Tom’s Hardware. These include Compression Attached Memory Modules (CAMMs) for at least ultra-thin notebooks, compact desktops, and other small form-factor applications; Multi-Ranked Buffered DIMMs (MR DIMMs) for servers; and CXL memory expansion modules for machines that need additional system memory at a cost lower than that of commodity DRAM.

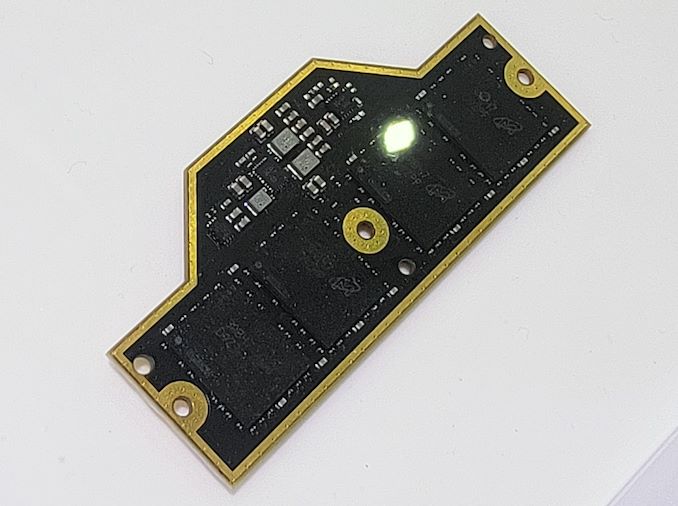

CAMM

The CAMM specification is slated to be finalized by JEDEC later in 2023. Still, ADATA showed a sample of such a module at the trade show to highlight its readiness to adopt the upcoming technology.

Image Courtesy Tom’s Hardware

The key advantages of CAMMs include shorter connections between memory chips and memory controllers (which simplifies topology and therefore enables higher transfer rates and lowers costs), the use of modules based on DDR5 or LPDDR5 chips (LPDDR has traditionally used point-to-point connectivity), dual-channel connectivity on a single module, higher DRAM density, and reduced thickness compared to dual-sided SO-DIMMs.

While the transition to a brand-new type of memory module will require tremendous effort from the industry, the advantages promised by CAMMs will likely justify the change.

In 2022, Dell became the first PC maker to adopt CAMM in its Precision 7670 notebook. ADATA’s CAMM module differs significantly from Dell’s version, although this is not unexpected, as Dell has been using pre-JEDEC-standardized modules.

MR DIMM

Datacenter-grade CPUs are increasing their core counts rapidly and therefore need to support more memory with each generation. However, it is hard to increase DRAM device density at a high pace due to cost, performance, and power consumption concerns. This is why, along with adding cores, processors add memory channels, which results in an abundance of memory slots per CPU socket and increased motherboard complexity.

As a result, the industry is developing two types of memory modules to replace the RDIMMs/LRDIMMs used today.

On the one hand, there is the Multiplexer Combined Ranks DIMM (MCR DIMM) technology backed by Intel and SK Hynix. MCR DIMMs are dual-rank buffered memory modules with a multiplexer buffer that fetches 128 bytes of data from both ranks, which operate simultaneously, and works with the memory controller at high speed (we are talking about 8,000 MT/s for now). Such modules promise to increase performance significantly and somewhat simplify building dual-rank modules.
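The multiplexing idea can be sketched in a few lines of Python. This is a simplified model, not Intel’s or SK Hynix’s actual design: the 64-byte burst per rank, the assumption that each rank runs at half the host-side rate, and the helper names `mux_fetch` and `rank_rate_mts` are all hypothetical, and a real buffer interleaves data at a much finer granularity than simple concatenation.

```python
# Hypothetical model of an MCR DIMM-style multiplexing buffer.
# Assumptions (not from the article): each rank returns 64 bytes per access,
# and the ranks split the host-side transfer rate equally.

RANK_BURST_BYTES = 64  # assumed bytes delivered by one rank per access


def rank_rate_mts(host_rate_mts: int, ranks: int = 2) -> float:
    """Per-rank DRAM transfer rate if `ranks` ranks share the host link equally."""
    return host_rate_mts / ranks


def mux_fetch(rank0: bytes, rank1: bytes) -> bytes:
    """Combine two concurrent rank bursts into one host-side transfer."""
    if len(rank0) != RANK_BURST_BYTES or len(rank1) != RANK_BURST_BYTES:
        raise ValueError("each rank must deliver exactly one 64-byte burst")
    # Simplification: both ranks are read at the same time and the buffer
    # forwards their data back to back, so the controller gets 128 bytes.
    return rank0 + rank1


if __name__ == "__main__":
    burst = mux_fetch(bytes(64), b"\x01" * 64)
    print(len(burst))           # 128
    print(rank_rate_mts(8000))  # 4000.0
```

Under these assumptions, an 8,000 MT/s host link corresponds to two ranks each running at about 4,000 MT/s, which is the appeal of the approach: the DRAM itself does not have to run at the full interface speed.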

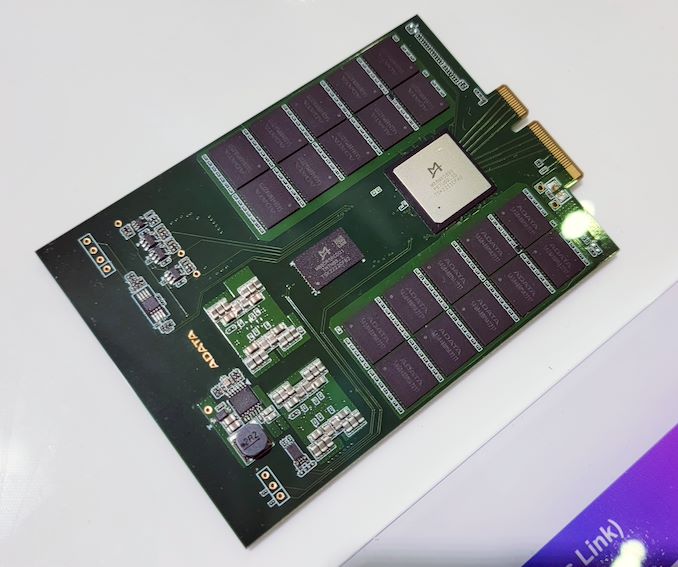

Image Courtesy Tom’s Hardware

On the other hand, there is the Multi-Ranked Buffered DIMM (MR DIMM) technology, which seems to be supported by AMD, Google, Microsoft, JEDEC, and Intel (at least based on information from ADATA). MR DIMM uses the same concept as MCR DIMM: a buffer that accesses both ranks simultaneously and communicates with the memory controller at an increased data transfer rate. This specification promises to start at 8,800 MT/s with Gen1, then evolve to 12,800 MT/s with Gen2, and then skyrocket to 17,600 MT/s with Gen3. ADATA already has MR DIMM samples supporting an 8,400 MT/s data transfer rate with capacities of 16GB, 32GB, 64GB, 128GB, and 192GB of DDR5 memory. According to ADATA, these modules will be supported by Intel’s Granite Rapids CPUs.
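To put the MR DIMM data rates in perspective, peak theoretical bandwidth per module is simply the transfer rate multiplied by the width of the data bus. The sketch below assumes a 64-bit (8-byte) data path per DIMM with ECC bits excluded, as on conventional DDR5 modules; `peak_gb_per_s` is a hypothetical helper, and real sustained bandwidth will be lower.

```python
# Peak theoretical bandwidth per module for the MR DIMM data rates above.
# Assumption: a 64-bit (8-byte) data path per DIMM, ECC bits excluded.

DATA_BUS_BYTES = 8

MRDIMM_RATES_MTS = {
    "Gen1": 8_800,
    "Gen2": 12_800,
    "Gen3": 17_600,
    "ADATA sample": 8_400,
}


def peak_gb_per_s(rate_mts: int, bus_bytes: int = DATA_BUS_BYTES) -> float:
    """Mega-transfers per second times bytes per transfer, in decimal GB/s."""
    return rate_mts * bus_bytes / 1_000


for name, rate in MRDIMM_RATES_MTS.items():
    print(f"{name}: {rate} MT/s -> {peak_gb_per_s(rate):.1f} GB/s")
```

Under that assumption, Gen1 through Gen3 work out to 70.4, 102.4, and 140.8 GB/s per module, and ADATA’s 8,400 MT/s samples to 67.2 GB/s.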

CXL Memory

But while both MR DIMMs and MCR DIMMs promise to increase module capacity, some servers need a lot of system memory at a relatively low cost. Today, such machines have to rely on Intel’s Optane DC Persistent Memory modules, which are based on the now-obsolete 3D XPoint memory and reside in standard DIMM slots. In the future, however, they will use memory on modules supporting the Compute Express Link (CXL) specification and connected to host CPUs via a PCIe interface.

Image Courtesy Tom’s Hardware

ADATA showed a CXL 1.1-compliant memory expansion device at Computex with an E3.S form factor and a PCIe 5.0 x4 interface. The unit is designed to expand server system memory cost-effectively using 3D NAND, yet with significantly lower latencies than even cutting-edge SSDs.
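For scale, the raw bandwidth of the device’s PCIe 5.0 x4 link can be estimated from PCIe 5.0’s 32 GT/s per-lane signaling rate and its 128b/130b line encoding. This is a rough sketch: it ignores CXL and PCIe protocol overhead, so it gives an upper bound rather than measured throughput, and `link_gb_per_s` is a hypothetical helper.

```python
# Rough per-direction bandwidth of a PCIe 5.0 x4 link, as used by ADATA's CXL device.
# Inputs: 32 GT/s per lane and 128b/130b encoding (PCIe 5.0 physical layer).
# Protocol overhead (packet/flit headers, flow control) is ignored,
# so real-world throughput will be lower.

GT_PER_S_PER_LANE = 32.0
ENCODING_EFFICIENCY = 128 / 130


def link_gb_per_s(lanes: int) -> float:
    """Raw per-direction payload bandwidth in GB/s after line encoding."""
    gbits_per_s = GT_PER_S_PER_LANE * ENCODING_EFFICIENCY * lanes
    return gbits_per_s / 8  # bits to bytes


print(f"PCIe 5.0 x4: ~{link_gb_per_s(4):.2f} GB/s per direction")
```

This works out to roughly 15.75 GB/s in each direction, which is one reason such devices are positioned as capacity expanders rather than as replacements for DIMM-attached DRAM bandwidth.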