Software isn’t created in one dramatic step. It improves bit by bit, one small step at a time: editing, running unit tests, fixing build errors, addressing code reviews, editing some more, appeasing linters, and fixing more errors, until finally it becomes good enough to merge into a code repository. Software engineering isn’t an isolated process, but a dialogue among human developers, code reviewers, bug reporters, software architects, and tools such as compilers, unit tests, linters, and static analyzers.

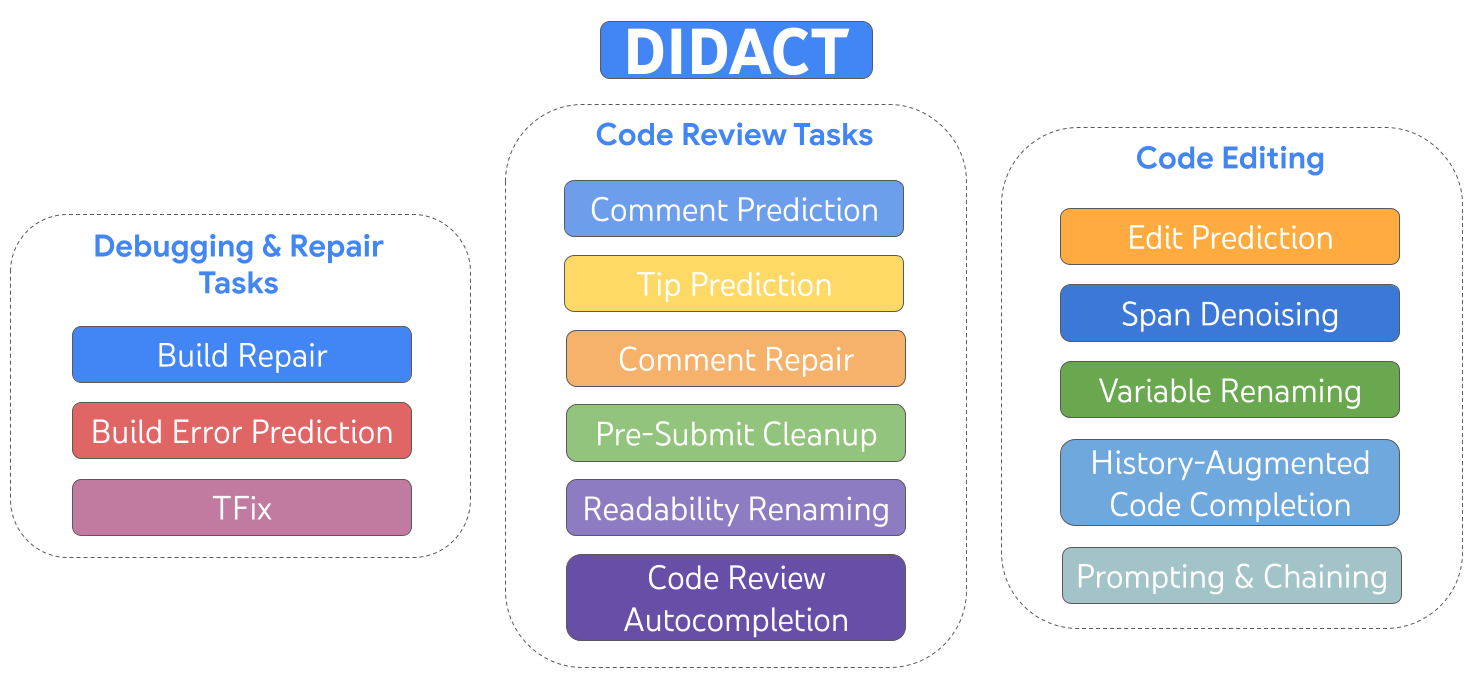

Today we describe DIDACT (Dynamic Integrated Developer ACTivity), a methodology for training large machine learning (ML) models for software development. The novelty of DIDACT is that it uses the process of software development as the source of training data for the model, rather than just the polished end state of that process, the finished code. By exposing the model to the contexts that developers see as they work, paired with the actions they take in response, the model learns about the dynamics of software development and is more aligned with how developers spend their time. We leverage instrumentation of Google’s software development to scale up the quantity and diversity of developer-activity data beyond previous works. Results are extremely promising along two dimensions: usefulness to professional software developers, and as a potential basis for imbuing ML models with general software development skills.

DIDACT is a multi-task model trained on development activities that include editing, debugging, repair, and code review.

We built and deployed internally three DIDACT tools, Comment Resolution (which we recently announced), Build Repair, and Tip Prediction, each integrated at a different stage of the development workflow. All three of these tools received enthusiastic feedback from thousands of internal developers. We see this as the ultimate test of usefulness: do professional developers, who are often experts on the code base and who have carefully honed workflows, leverage the tools to improve their productivity?

Perhaps most excitingly, we demonstrate how DIDACT is a first step towards a general-purpose developer-assistance agent. We show that the trained model can be used in a variety of surprising ways, via prompting with prefixes of developer activities, and by chaining together multiple predictions to roll out longer activity trajectories. We believe DIDACT paves a promising path towards developing agents that can generally assist across the software development process.

A treasure trove of data about the software engineering process

Google’s software engineering toolchains store every operation related to code as a log of interactions among tools and developers, and have done so for decades. In principle, one could use this record to replay in detail the key episodes in the “software engineering video” of how Google’s codebase came to be, step by step: one code edit, compilation, comment, variable rename, etc., at a time.

Google code lives in a monorepo, a single repository of code for all tools and systems. A software developer typically experiments with code changes in a local copy-on-write workspace managed by a system called Clients in the Cloud (CitC). When the developer is ready to package a set of code changes together for a specific purpose (e.g., fixing a bug), they create a changelist (CL) in Critique, Google’s code-review system. As with other types of code-review systems, the developer engages in a dialog with a peer reviewer about functionality and style. The developer edits their CL to address reviewer comments as the dialog progresses. Eventually, the reviewer declares “LGTM!” (“looks good to me”), and the CL is merged into the code repository.

Of course, in addition to a dialog with the code reviewer, the developer also maintains a “dialog” of sorts with a plethora of other software engineering tools, such as the compiler, the testing framework, linters, static analyzers, fuzzers, etc.

A multi-task model for software engineering

DIDACT utilizes interactions among engineers and tools to power ML models that assist Google developers, by suggesting or enhancing actions developers take, in context, while pursuing their software-engineering tasks. To do that, we have defined a number of tasks about individual developer activities: repairing a broken build, predicting a code-review comment, addressing a code-review comment, renaming a variable, editing a file, etc. We use a common formalism for each activity: it takes some State (a code file), some Intent (annotations specific to the activity, such as code-review comments or compiler errors), and produces an Action (the operation taken to address the task). This Action is like a mini programming language, and can be extended for newly added activities. It covers things like editing, adding comments, renaming variables, marking up code with errors, etc. We call this language DevScript.
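To make the State, Intent, and Action roles concrete, here is a minimal Python sketch of one training example for the task of addressing a code-review comment. It is an illustration under our own assumptions, not DevScript: this post does not show DevScript’s syntax, so every class name, field name, and task label below is hypothetical.

```python
from dataclasses import dataclass
from typing import Union


@dataclass(frozen=True)
class State:
    """The code the developer is looking at (here, a single file)."""
    path: str
    content: str


@dataclass(frozen=True)
class Intent:
    """Activity-specific annotation, e.g. a review comment or a compiler error."""
    kind: str     # hypothetical label such as "review_comment" or "build_error"
    message: str
    line: int     # 1-based line the annotation is attached to


@dataclass(frozen=True)
class EditAction:
    """Replace one line of the file with new text."""
    line: int     # 1-based
    new_text: str


@dataclass(frozen=True)
class CommentAction:
    """Attach a comment to one line of the file."""
    line: int
    text: str


# New activities can be supported by adding more action types to this union.
Action = Union[EditAction, CommentAction]


@dataclass(frozen=True)
class Example:
    """One (task, State, Intent) -> Action demonstration."""
    task: str
    state: State
    intent: Intent
    action: Action


def apply_edit(state: State, action: EditAction) -> State:
    """Return the State that results from applying a single-line edit."""
    lines = state.content.split("\n")
    lines[action.line - 1] = action.new_text
    return State(state.path, "\n".join(lines))


# Usage: a reviewer asks for math.pi, and the action is the edit that fixes it.
state = State("util.py", "def area(r):\n    return 3.14 * r * r")
intent = Intent("review_comment", "Use math.pi instead of 3.14.", line=2)
action = EditAction(line=2, new_text="    return math.pi * r * r")
example = Example("address_review_comment", state, intent, action)
new_state = apply_edit(state, action)
```

The point of the sketch is only the shape of the data: the model sees the task, the State, and the Intent, and is trained to produce the Action.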

The DIDACT model is prompted with a task, code snippets, and annotations related to that task, and produces development actions, e.g., edits or comments.

This state-intent-action formalism enables us to capture many different tasks in a general way. What’s more, DevScript is a concise way to express complex actions, without the need to output the whole state (the original code) as it would be after the action takes place; this makes the model more efficient and more interpretable. For example, a rename might touch a file in dozens of places, but a model can predict a single rename action.
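The rename example can be sketched in a few lines of Python. This is a simplified stand-in, not DevScript: `RenameAction` and `apply_rename` are hypothetical names, and the rename is purely textual (a real tool would respect scopes, strings, and comments). It shows that the predicted action stays two identifiers long no matter how many places in the file it touches.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class RenameAction:
    """A compact action: rename identifier `old` to `new` everywhere."""
    old: str
    new: str


def apply_rename(source: str, action: RenameAction) -> str:
    """Expand the compact action into the full edited file."""
    # \b restricts matches to whole identifiers, so renaming "cnt"
    # leaves "cnt_max" alone (the underscore is a word character).
    pattern = re.compile(rf"\b{re.escape(action.old)}\b")
    # A function replacement avoids any backslash interpretation in `new`.
    return pattern.sub(lambda _match: action.new, source)


SOURCE = """\
def total(items):
    cnt = 0
    for item in items:
        cnt += item.price
    cnt_max = len(items)
    return cnt, cnt_max
"""

action = RenameAction(old="cnt", new="subtotal")
renamed = apply_rename(SOURCE, action)

# Number of places the single action touches in this file.
touched = len(re.findall(r"\bcnt\b", SOURCE))
```

Here the model would only need to emit the one `RenameAction`; the editor, not the model, expands it into the three changed lines.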

An ML peer programmer

DIDACT does a good job on individual assistive tasks. For example, below we show DIDACT doing code clean-up after functionality is mostly done. It looks at the code along with some final comments by the code reviewer (marked with “human” in the animation), and predicts edits to address those comments (rendered as a diff).

The multimodal nature of DIDACT also gives rise to some surprising capabilities, reminiscent of behaviors emerging with scale. One such capability is history augmentation, which can be enabled via prompting. Knowing what the developer did recently enables the model to make a better guess about what the developer should do next.

An illustration of history-augmented code completion in action.

A powerful task exemplifying this capability is history-augmented code completion. In the figure, the developer adds a new function parameter (1), and moves the cursor into the documentation (2). Conditioned on the history of developer edits and the cursor position, the model completes the line (3) by correctly predicting the docstring entry for the new parameter.

An illustration of edit prediction, over multiple chained iterations.

In an even more powerful history-augmented task, edit prediction, the model can choose where to edit next in a fashion that is historically consistent. If the developer deletes a function parameter (1), the model can use history to correctly predict an update to the docstring (2) that removes the deleted parameter (without the human developer manually placing the cursor there) and to update a statement in the function (3) in a syntactically (and, arguably, semantically) correct way. With history, the model can unambiguously decide how to continue the “editing video” correctly. Without history, the model wouldn’t know whether the missing function parameter is intentional (because the developer is in the process of a longer edit to remove it) or accidental (in which case the model should re-add it to fix the problem).

The model can go even further. For example, we started with a blank file and asked the model to successively predict what edits would come next until it had written a full code file. The astonishing part is that the model developed code in a step-by-step way that would seem natural to a developer: it started by first creating a fully working skeleton with imports, flags, and a basic main function. It then incrementally added new functionality, like reading from a file and writing results, and added functionality to filter out some lines based on a user-provided regular expression, which required changes across the file, like adding new flags.
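The control flow of such a rollout, where each prediction is conditioned on the file so far and on the history of earlier edits, can be sketched as a short loop. Everything here is an assumption made for illustration: the `Edit` representation, the `roll_out` function, and especially `scripted_predictor`, which replays a fixed script in place of a trained model.

```python
from typing import Callable, List, Optional, Tuple

# Hypothetical edit format: insert `text` before the 0-based line `index`.
Edit = Tuple[int, str]
# A predictor sees the current file and the edit history, and returns the
# next edit, or None when it considers the file finished.
Predictor = Callable[[List[str], List[Edit]], Optional[Edit]]


def roll_out(predict: Predictor, max_steps: int = 20) -> Tuple[List[str], List[Edit]]:
    """Start from a blank file and chain predictions until the predictor stops."""
    lines: List[str] = []
    history: List[Edit] = []
    for _ in range(max_steps):
        edit = predict(lines, history)
        if edit is None:
            break
        index, text = edit
        lines.insert(index, text)
        history.append(edit)  # the next prediction is conditioned on this
    return lines, history


def scripted_predictor(lines: List[str], history: List[Edit]) -> Optional[Edit]:
    """Stand-in for a model: replays a fixed script, one edit per call."""
    script: List[Edit] = [
        (0, "import sys"),            # skeleton first
        (1, "def main():"),
        (2, "    print(sys.argv)"),
        (1, "import re"),             # a later step revisits the top of the file
    ]
    step = len(history)
    return script[step] if step < len(script) else None


final_lines, history = roll_out(scripted_predictor)
```

The last scripted edit goes back to the import section after the body exists, which loosely mirrors how new functionality in the example above required changes elsewhere in the file, such as adding new flags.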

Conclusion

DIDACT turns Google’s software development process into training demonstrations for ML developer assistants, and uses those demonstrations to train models that construct code in a step-by-step fashion, interactively with tools and code reviewers. These innovations are already powering tools enjoyed by Google developers every day. The DIDACT approach complements the great strides taken by large language models at Google and elsewhere, towards technologies that ease toil, improve productivity, and enhance the quality of work of software engineers.

Acknowledgements

This work is the result of a multi-year collaboration among Google Research, Google Core Systems and Experiences, and DeepMind. We would like to acknowledge our colleagues Jacob Austin, Pascal Lamblin, Pierre-Antoine Manzagol, and Daniel Zheng, who join us as the key drivers of this project. This work could not have happened without the significant and sustained contributions of our partners at Alphabet (Peter Choy, Henryk Michalewski, Subhodeep Moitra, Malgorzata Salawa, Vaibhav Tulsyan, and Manushree Vijayvergiya), as well as the many people who collected data, identified tasks, built products, strategized, evangelized, and helped us execute on the many facets of this agenda (Ankur Agarwal, Paige Bailey, Marc Brockschmidt, Rodrigo Damazio Bovendorp, Satish Chandra, Savinee Dancs, Matt Frazier, Alexander Frömmgen, Nimesh Ghelani, Chris Gorgolewski, Chenjie Gu, Vincent Hellendoorn, Franjo Ivančić, Marko Ivanković, Emily Johnston, Luka Kalinovcic, Lera Kharatyan, Jessica Ko, Markus Kusano, Kathy Nix, Sara Qu, Marc Rasi, Marcus Revaj, Ballie Sandhu, Michael Sloan, Tom Small, Gabriela Surita, Maxim Tabachnyk, David Tattersall, Sara Toth, Kevin Villela, Sara Wiltberger, and Donald Duo Zhao) and our extremely supportive leadership (Martín Abadi, Joelle Barral, Jeff Dean, Madhura Dudhgaonkar, Douglas Eck, Zoubin Ghahramani, Hugo Larochelle, Chandu Thekkath, and Niranjan Tulpule). Thank you!